- 355

- 89

First off, I know, Reddit-tier meme title.

But I must CONFOOS

1TB is enough for me and I've only needed to use 2TB storage twice in my life.

I just upgraded all my devices around the house to USB-C, this means everything from vapes to flash drives too.

I literally cannot see past 60FPS, and 30 to 60 has never been a huge deal to me. All that fuss over slightly smoother animation? Really?

-

TT___TT

: Windows > Mac tho

(both < to  )

- melgibsonsDUI : Windows is for when you're both poor (not using Mac) /and/ r-slurred (not using Linux)

- 268

- 151

Microsoft wants to move Windows fully to the cloud

Microsoft has been increasingly moving Windows to the cloud on the commercial side with Windows 365, but the software giant also wants to do the same for consumers. In an internal “state of the business” Microsoft presentation from June 2022, Microsoft discusses building on “Windows 365 to enable a full Windows operating system streamed from the cloud to any device.”

The presentation has been revealed as part of the ongoing FTC v. Microsoft hearing, as it includes Microsoft’s overall gaming strategy and how that relates to other parts of the company’s businesses. Moving “Windows 11 increasingly to the cloud” is identified as a long-term opportunity in Microsoft’s “Modern Life” consumer space, including using “the power of the cloud and client to enable improved AI-powered services and full roaming of people’s digital experience.”

- 252

- 146

Basics

Firstly, the basics. bbbb uses GPT-3 in the zero-shot mode. What that means is that there are no examples given. Yes, really! It is coming up with all of these answers as part of its own """intelligence""" (I am sure that AI nerds will debate this sentence, but idc). You can actually try this out by going to [OpenAI's API page](https://beta.openai.com/overview). It does kind of  , but it does work.

Anyways, when bbbb replies to a comment, it takes the text of the comment, normalizes it, and puts it into this prompt

Write an abrasive reply to this comment: "<comment>"

...that's it. I don't give it any context or anything. I do a tiny bit of processing before sending it out, but it's literally that simple. I'm sure you can see where I'm going with this: this is the low end of what bbbb is capable of. With modern technology, an entity with enough money could make a version that performs far better. Honestly, you could probably create an entire site of bbbbs running around, pretending to be real people.
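A minimal sketch of that prompt-building step, for the curious. Only the template string comes from the post; the function names and the exact normalization (collapsing whitespace, swapping double quotes so they don't clash with the quotes in the template) are assumptions, since the post doesn't say what "normalizes it" actually covers.

```python
import re

# The template is taken from the post; everything else here is assumed.
PROMPT_TEMPLATE = 'Write an abrasive reply to this comment: "{comment}"'


def normalize_comment(text: str) -> str:
    """Hypothetical normalization: collapse whitespace, swap double quotes."""
    text = re.sub(r"\s+", " ", text).strip()
    # Double quotes would clash with the quotes wrapping the comment.
    return text.replace('"', "'")


def build_prompt(comment: str) -> str:
    """Fill the zero-shot template with the normalized comment text."""
    return PROMPT_TEMPLATE.format(comment=normalize_comment(comment))


if __name__ == "__main__":
    print(build_prompt('  Windows   is "fine"\nactually '))
```

No examples are included in the prompt, which is what makes it zero-shot: the model only ever sees the instruction and the one comment.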

The reason I don't feed in context is because OpenAI are a bunch of jews  and charge a really high rate for token processing. Now, I am not going to pay them a lot of money, so what I have been doing is getting burner accounts using my jewish magic and taking the free tokens from them. However, this is kind of a chore, and I don't want to do it every day lol. So far, I am on my fourth burner account lmao (thanks to

@everyone and @crgd for help bros)

Q and A

Q. Could you do this for reddit?

Probably, but there are a lot of variables to account for. Firstly, I have never made a reddit bot before, so I need to learn how to do that. Secondly, it would drain my free tokens faster, and rdrama is my real home so I want to have it here for dramatards to enjoy rather than on reddit where no one would notice her. Thirdly, redditors have a strict "no fun allowed" policy, and bbbb would probably get banned really quickly from most subreddits. Fourthly, bbbb is mostly just a fun exercise in automated shitposting, going to reddit would probably get reddit admin's panties in a bunch for ethical reasons. OpenAI would probably get involved and shut down everything

The long and short of it is, I could, but I don't want to. Someone else do it.

Q. Really? Every comment?

Yes, every comment was made by bbbb. Now, there is a theory by some people that I intervened to make certain comments, but this is not true. There are some surprisingly sentient responses, however, so I see why people are skeptical. So, to that end, I will share bbbb's complete log. You see, ever since bbbb began a month ago, I have kept a running log. This log is now over 100000 lines long (lol), but it has every comment ever made by bbbb, along with alternatives considered. See it here

Q. How does it do marsies?

Okay, I kind of cheated here. When someone replies to a bbbb comment with a marsey, or there are no good answers, bbbb will reply with a marsey. It will choose one randomly from a fixed list of marseys.
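The marsey fallback could be sketched roughly like this. The emoji names in `MARSEY_POOL`, the `is_marsey` check, and the "take the first candidate" rule are all hypothetical stand-ins, since neither the real list nor the selection logic is shown in the post.

```python
import random

# Hypothetical emoji codes; the real list is not given in the post.
MARSEY_POOL = [":marseyexample1:", ":marseyexample2:", ":marseyexample3:"]


def is_marsey(text: str) -> bool:
    """Assumed check: the whole comment is one :marsey...: emoji code."""
    stripped = text.strip()
    return (
        stripped.startswith(":marsey")
        and stripped.endswith(":")
        and " " not in stripped
    )


def pick_reply(parent_text, candidates, rng=random):
    """Return a random marsey if the parent is a marsey or no answer is usable.

    `candidates` is the list of generated replies judged good enough;
    how that judgment is made is outside this sketch.
    """
    if is_marsey(parent_text) or not candidates:
        return rng.choice(MARSEY_POOL)
    return candidates[0]
```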

Q. Who was the first person to realize bbbb was a bot?

Well, I'm sure there are many people who say they thought bbbb was a bot. Officially, the first person to propose that bbbb was a bot was @chiobu. However, the first person to really break the case wide open was @HaloFan2002, leading to the hilarious thread where @AHHHHHHHHH posted a captcha, and @bbbb told him to kill himself

Q. Doesn't this break the GPT-3 code of conduct?

Q. Does Aevann know about this?

Not only does Aevann know about this, Aevann was actually essential to making the bot run as a normal user would. Carp was also aware, as well as most of the janitorial staff.

Q. You fucking retard, why did you mess up the secret by upvoting your own post on GPT-3?

Okay, in my defense, when I created BBBB I didn't mean for her to be a secret! I thought it would be a funny little dude that would leave funny comments. So, I upvoted my GPT-3 post as an easter egg.

Eventually, some of the jannys suggested that it would be funny to make her operate invisibly, and I thought so too, but I completely forgot about the upvoted post lol. So yes I am a tard, but only like half a tard.

Q. Can I see the code?

Well, I'm not sure. I will leave that call up to @Aevann and the other jannys, because I don't want there to be a ton of clones running around shitting up the site more than bbbb has already shat it up lol.

Q. Why does it have a normal posting distribution?

I did this in code. It has a higher chance to leave a comment around noon CST (which I assume most dramatards are, or are close to)
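One way this kind of time-weighted posting could work, as a sketch only: the chance of commenting follows a bell curve centered on noon. The noon peak is from the post; the spread, the minimum and maximum probabilities, and the function names are assumptions, and the caller is expected to pass the hour already converted to CST.

```python
import math
import random

PEAK_HOUR = 12.0     # noon CST, from the post
SPREAD_HOURS = 4.0   # assumed width of the bell curve
MAX_PROB = 0.5       # assumed chance of commenting at the peak
MIN_PROB = 0.05      # assumed floor so it still posts at night


def comment_probability(hour: float) -> float:
    """Chance of leaving a comment at a given hour of the day (0-24, CST)."""
    # Circular distance to the peak, so 23:00 and 01:00 are treated alike.
    diff = abs(hour - PEAK_HOUR) % 24
    dist = min(diff, 24 - diff)
    bump = math.exp(-0.5 * (dist / SPREAD_HOURS) ** 2)
    return MIN_PROB + (MAX_PROB - MIN_PROB) * bump


def should_comment(hour: float, rng=random) -> bool:
    """Roll the dice for one opportunity to comment."""
    return rng.random() < comment_probability(hour)
```

Called once per candidate comment, this produces the most activity around midday and a trickle overnight, which looks more like a person than a uniform posting rate would.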

- 228

- 184

- rDramaHistorian : That truly sentient AI's name? Bardfinn

- 207

- 118

We're all going to die.

Ahead of OpenAI CEO Sam Altman's four days in exile, several staff researchers sent the board of directors a letter warning of a powerful artificial intelligence discovery that they said could threaten humanity, two people familiar with the matter told Reuters.

[...]

The maker of ChatGPT had made progress on Q* (pronounced Q-Star), which some internally believe could be a breakthrough in the startup's search for superintelligence, also known as artificial general intelligence (AGI), one of the people told Reuters. OpenAI defines AGI as AI systems that are smarter than humans.

Edit: The linked /pol/ thread is kinda insane

Edit 2:

They're now trying to cover it up

- 0 : poorcel

- 196

- 121

The even more frugal guy reading this and using a $100 phone is wondering why OP is splurging on a $200 phone.

I keep my phones for a long time too.

But once it stops getting security updates, I get a new one. You would be more at risk from security exploits if you're not getting patches anymore. The savings from not buying a new phone is not worth the risk.

Not everyone sees the world the way you do.

Because some people like them the same way you like your phone. It’s okay for people to like different things and have different priorities

Nah if somebody buys the new iphone every year thats a massive redflag that theyre dumb [-72]

I don't understand it either. I used to buy iphones around iphone 2-5 but each one completely died after a year. I got so sick of it so I switched to Samsung. I'm on my second Samsung in like 10 years. I later found out that Apple was updating their phone to kill battery life after the phone was about a year old. I will never ever buy an Apple product again.

Credit to /h/miners! Subscribe for more great dramatic threads.

- 194

- 180

Comments:

https://news.ycombinator.com/item?id=36030969

https://archived.moe/g/thread/93632944/linux-graphics-stack-is-trans (EDIT: removed/jannied, archive: https://archived.moe/g/thread/93632944/)

HN users are noticing something:

« I’m on the board overseeing Linux graphics. Half of us are trans »

From a purely statistical POV, this is absurdly bizarre.

Not really.

Statistically there will be weird coincidences completely naturally. It's also quite arbitrary which we see as meaningful. If say, Linux networking has unusually many people called "John" that probably will be unnoticed because nobody pays that much attention to common, unremarkable names. If they all randomly turn out to have green eyes, then that's more visible. It's completely subjective which of those is more remarkable.

There are also likely social effects -- people stick together, and some side interests align with some fields. Eg, I think it's reasonable to guess there's going to be more furries than average in VR development. Part because VR allow you to look like whatever you want a lot of the time, part because people will invite their friends in.

It is not just a random coincidence. It's a phenomenon more broadly across programming, especially very low-level/hardware stuff.

There does seem to be a correlation between autism spectrum and gender confusion, with the former often present in individuals who are into highly technical pursuits.

The logic seems to be not conforming to masculine stereotypes ==> must be a woman.

It's not "gender confusion". They know very well who they are. You are confused about the topic.

Meanwhile on /g/:

- 185

- 87

Second #lk99 replication from China pic.twitter.com/jcI3C35hxF

— LERE (@lere0_0) August 1, 2023

Re-uploaded for my rate-limited bros

Notice how the rock does the same thing irrespective of which way the magnet is oriented? Notice the absolute lack of refrigeration equipment? This is the real deal. I feel like we can finally relax. Everything's going to be just fine.

- 182

- 189

Twitter Seethe:

- 181

- 181

Do we really live in a free country? pic.twitter.com/OeaD1FBGap

— Nigel Farage (@Nigel_Farage) June 29, 2023

Remember this guy?

This is the guy who was photographed with Trump and is mostly responsible for Brexit, much to the dismay of some europhiles:

Anyway, Nigel reeing here:

https://twitter.com/Nigel_Farage/status/1674507257248116746

Some key quotes include

'This is serious political persecution at the very highest level of our system. If they can do it to me, they can do it to you too.'

Speaking of the importance of having a bank account, he added: 'You effectively become a non-person, you don't actually exist.

'It's like the worse regimes of the mid-20th century, be they in Russia or Germany, you literally become a non-person.

'I won't be able to have a debit card linked directly to my account. I won't really be able to exist or function in a modern 21st century Britain.'

Story here

He suspects he was cancelled as revenge for spearheading Brexit and has tried to open up 7 other bank accounts in the UK, but they all refuse. Being cancelled online is one thing. Not being able to open a bank account to exchange money is quite the next level. He's saying how stressful it's been and that he might have to move from the UK. He even claims his closest family members have had their bank accounts closed too.

Maybe commie China has come sooner than I anticipated.

There is a tiny ray of hope. No. 10 (the government) are looking into the matter and have given a warning to the banks over being too trigger happy when shutting accounts down.

- 179

- 181

- 172

- 172

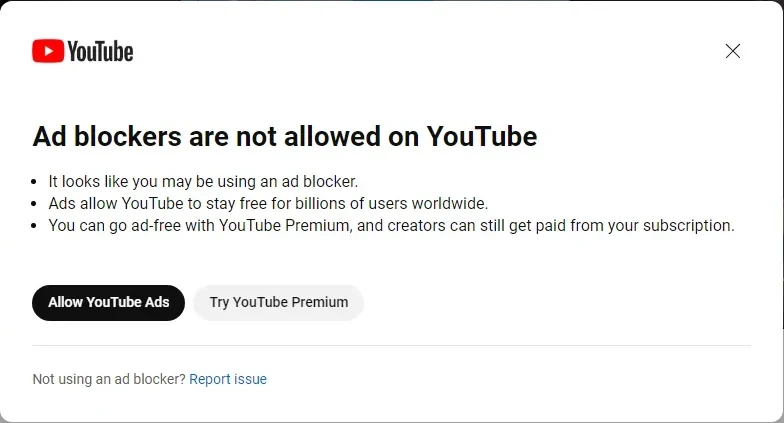

Asked for comment, a Google spokesperson told IGN that it was a "small experiment."

"We're running a small experiment globally that urges viewers with ad blockers enabled to allow ads on YouTube or try YouTube Premium," they said via email. "Ad blocker detection is not new, and other publishers regularly ask viewers to disable ad blockers."

reddit discus

https://old.reddit.com/r/youtube/comments/13cfdbi/apparently_ad_blockers_are_not_allowed_on_youtube/?sort=controversial <- This is where it was originally first posted (1k Updoots and 1k cumments)

poster note: I tried adding all the 'its over' to capture the diversity that this decision will affect and was met with this

i do not feel bad at all

- 2DBussy : HE REDEEMED

- 166

- 167

i just did this now and didnt tell any of the other jannies lol

deliberate

- 164

- 139

YouTube is removing the ability for creators to choose where ads show up or whether viewers can skip them pic.twitter.com/HXJgZw2ANO

— Dexerto (@Dexerto) September 6, 2023

- 164

- 195

- DickButtKiss : Its over

- 160

- 131

Introducing Sora, our text-to-video model.

— OpenAI (@OpenAI) February 15, 2024

Sora can create videos of up to 60 seconds featuring highly detailed scenes, complex camera motion, and multiple characters with vibrant emotions. https://t.co/7j2JN27M3W

Prompt: “Beautiful, snowy… pic.twitter.com/ruTEWn87vf

Lots of seethe on Twitter. Discuss the societal implications, and what degenerate thing you're going to make when Stable Diffusion releases their copy in a year!

Also, what will it take for Yann LeCum to admit he is wrong? We'll have AIs that simulate the future and he will still be arguing they aren't intelligent and his model (which is essentially the same thing) is better

- CREAMY_DOG_ORGASM : my account is unusable while I'm banned. Could you buy me an unban award pwease

- 160

- 235

Back in the day I used to do Mechanical Turk-like work assessing search engine quality. There were very detailed guidelines about what made a search engine good, compiled into a roughly 250-page document that Google had been curating and updating over the course of years.

One of the key concepts was the idea of a "vital" result for a user request. If a user had a specific request, the search engine had to deliver that content first. For example, simpson.com at the time was a malicious website. With this in mind, if the user searched for "simpson.com", the first result had to be simpson.com, even if the search engine is returning a malicious page. It's specifically what the user requested. We aren't supposed to question what the user wants. The results that followed after could provide suggestions of what else the user may be looking for, like the official Simpsons website.

I would love to see whatever shreds of this document are left at this point, and I'd love to know at what point the entire thing was thrown into the trash and rewritten. I assume somewhere around the year 2016 or 2020. I know this is nothing shocking to a lot of people, but it really does amaze me just how bad things have gotten. I've stuck to the major search engines because despite people's bitching, for a long time they consistently outperformed the smaller competitors, but they are genuinely, without hyperbole, almost unusable now.

Example: I wanted to find the recent Tucker Carlson - Vladimir Putin interview. It's a newsworthy interview with a world leader and a current event. There is a very specific video I'm looking for, the published, official video of Tucker Carlson sitting down and asking Vladimir Putin questions.

Here is what google returns in a private window:

The very first piece of content - the "vital result" - is clickbait youtube cute twinkry from Time: What are the keeraZIEST moments from the interview?!?

The rest of the results are a cascade of editorialized garbage, opinionated news articles reporting on the requested content. God forbid a careless user actually be exposed to a primary source.

The closest result to what I'm looking for is over 10 pieces of content deep - the transcript of the interview from Russia's state website. Likely this is an oversight.

Here is Bing:

There's been some meme going around that "no really guys, Bing is actually kinda good now believe it or not".

This is even more nonsense than Google. The most prominently featured content is, of course, more editorialized bullshit with the interview itself nowhere to be found. But also half of the content is just completely irrelevant crap I didn't ask for. Why is the entire right half of the page a massive infobox about Tucker and his books and quotes? Why am I seeing something about Game of Thrones?

Brave:

You get the point. More useless crap. It gets half a point for its AI accidentally revealing that tuckercarlson.com is where the interview is located, but this doesn't count. The actual search results are all garbage. Thanks Brave for showing me all the latest reddit discussions

Yandex:

Was that really so fricking hard? Result #1 - the interview from Tucker Carlson. Past the interview are news articles and images - things of waning utility that other users may be interested in. But the vital result is at the top of the page. That's fricking it. This would have been the required order for the page on Google ten years ago.

- 160

- 108

Orange site: https://news.ycombinator.com/item?id=32771071

Although tech platforms can help keep us connected, create a vibrant marketplace of ideas, and open up new opportunities for bringing products and services to market, they can also divide us and wreak serious real-world harms. The rise of tech platforms has introduced new and difficult challenges, from the tragic acts of violence linked to toxic online cultures, to deteriorating mental health and wellbeing, to basic rights of Americans and communities worldwide suffering from the rise of tech platforms big and small.

Today, the White House convened a listening session with experts and practitioners on the harms that tech platforms cause and the need for greater accountability. In the meeting, experts and practitioners identified concerns in six key areas: competition; privacy; youth mental health; misinformation and disinformation; illegal and abusive conduct, including sexual exploitation; and algorithmic discrimination and lack of transparency.

One participant explained the effects of anti-competitive conduct by large platforms on small and mid-size businesses and entrepreneurs, including restrictions that large platforms place on how their products operate and potential innovation. Another participant highlighted that large platforms can use their market power to engage in rent-seeking, which can influence consumer prices.

Several participants raised concerns about the rampant collection of vast troves of personal data by tech platforms. Some experts tied this to problems of misinformation and disinformation on platforms, explaining that social media platforms maximize "user engagement" for profit by using personal data to display content tailored to keep users' attention---content that is often sensational, extreme, and polarizing. Other participants sounded the alarm about risks for reproductive rights and individual safety associated with companies collecting sensitive personal information, from where their users are physically located to their medical histories and choices. Another participant explained why mere self-help technological protections for privacy are insufficient. And participants highlighted the risks to public safety that can stem from information recommended by platforms that promotes radicalization, mobilization, and incitement to violence.

Multiple experts explained that technology now plays a central role in access to critical opportunities like job openings, home sales, and credit offers, but that too often companies' algorithms display these opportunities unequally or discriminatorily target some communities with predatory products. The experts also explained that that lack of transparency means that the algorithms cannot be scrutinized by anyone outside the platforms themselves, creating a barrier to meaningful accountability.

One expert explained the risks of social media use for the health and wellbeing of young people, explaining that while for some, technology provides benefits of social connection, there are also significant adverse clinical effects of prolonged social media use on many children and teens' mental health, as well as concerns about the amount of data collected from apps used by children, and the need for better guardrails to protect children's privacy and prevent addictive use and exposure to detrimental content. Experts also highlighted the magnitude of illegal and abusive conduct hosted or disseminated by platforms, but for which they are currently shielded from being held liable and lack adequate incentive to reasonably address, such as child sexual exploitation, cyberstalking, and the non-consensual distribution of intimate images of adults.

The White House officials closed the meeting by thanking the experts and practitioners for sharing their concerns. They explained that the Administration will continue to work to address the harms caused by a lack of sufficient accountability for technology platforms. They further stated that they will continue working with Congress and stakeholders to make bipartisan progress on these issues, and that President Biden has long called for fundamental legislative reforms to address these issues.

Attendees at today's meeting included:

Bruce Reed, Assistant to the President & Deputy Chief of Staff

Susan Rice, Assistant to the President & Domestic Policy Advisor

Brian Deese, Assistant to the President & National Economic Council Director

Louisa Terrell, Assistant to the President & Director of the Office of Legislative Affairs

Jennifer Klein, Deputy Assistant to the President & Director of the Gender Policy Council

Alondra Nelson, Deputy Assistant to the President & Head of the Office of Science and Technology Policy

Bharat Ramamurti, Deputy Assistant to the President & Deputy National Economic Council Director

Anne Neuberger, Deputy National Security Advisor for Cyber and Emerging Technology

Tarun Chhabra, Special Assistant to the President & Senior Director for Technology and National Security

Dr. Nusheen Ameenuddin, Chair of the American Academy of Pediatrics Council on Communications and Media

Danielle Citron, Vice President, Cyber Civil Rights Initiative, and Jefferson Scholars Foundation Schenck Distinguished Professor in Law Caddell and Chapman Professor of Law, University of Virginia School of Law

Alexandra Reeve Givens, President and CEO, Center for Democracy and Technology

Damon Hewitt, President and Executive Director, Lawyers' Committee for Civil Rights Under Law

Mitchell Baker, CEO of the Mozilla Corporation and Chairwoman of the Mozilla Foundation

Karl Racine, Attorney General for the District of Columbia

Patrick Spence, Chief Executive Officer, Sonos

Principles for Enhancing Competition and Tech Platform Accountability

With the event, the Biden-Harris Administration announced the following core principles for reform:

Promote competition in the technology sector. The American information technology sector has long been an engine of innovation and growth, and the U.S. has led the world in the development of the Internet economy. Today, however, a small number of dominant Internet platforms use their power to exclude market entrants, to engage in rent-seeking, and to gather intimate personal information that they can use for their own advantage. We need clear rules of the road to ensure small and mid-size businesses and entrepreneurs can compete on a level playing field, which will promote innovation for American consumers and ensure continued U.S. leadership in global technology. We are encouraged to see bipartisan interest in Congress in passing legislation to address the power of tech platforms through antitrust legislation.

Provide robust federal protections for Americans' privacy. There should be clear limits on the ability to collect, use, transfer, and maintain our personal data, including limits on targeted advertising. These limits should put the burden on platforms to minimize how much information they collect, rather than burdening Americans with reading fine print. We especially need strong protections for particularly sensitive data such as geolocation and health information, including information related to reproductive health. We are encouraged to see bipartisan interest in Congress in passing legislation to protect privacy.

Protect our kids by putting in place even stronger privacy and online protections for them, including prioritizing safety by design standards and practices for online platforms, products, and services. Children, adolescents, and teens are especially vulnerable to harm. Platforms and other interactive digital service providers should be required to prioritize the safety and wellbeing of young people above profit and revenue in their product design, including by restricting excessive data collection and targeted advertising to young people.

Remove special legal protections for large tech platforms. Tech platforms currently have special legal protections under Section 230 of the Communications Decency Act that broadly shield them from liability even when they host or disseminate illegal, violent conduct or materials. The President has long called for fundamental reforms to Section 230.

Increase transparency about platform's algorithms and content moderation decisions. Despite their central role in American life, tech platforms are notoriously opaque. Their decisions about what content to display to a given user and when and how to remove content from their sites affect Americans' lives and American society in profound ways. However, platforms are failing to provide sufficient transparency to allow the public and researchers to understand how and why such decisions are made, their potential effects on users, and the very real dangers these decisions may pose.

Stop discriminatory algorithmic decision-making. We need strong protections to ensure algorithms do not discriminate against protected groups, such as by failing to share key opportunities equally, by discriminatorily exposing vulnerable communities to risky products, or through persistent surveillance.

- 156

- 124

Apple will finally allow for sideloading apps in iOS 17 to comply with EU regulations

— Apple Hub (@theapplehub) April 18, 2023

This would allow users to download apps outside of the App Store and allow developers to avoid the 15% to 30% fees

Source: @markgurman pic.twitter.com/VAjEDeXcLb

- rDramaHistorian : ITT: WinCucks and Linuxnerds fighting. MacChads stay winning

- 156

- 159

- 153

- 168

There's clearly an attitude of "we know what you want better than you" at google right now that can be seen by the ridiculous shit coming out of Gemini. This is why their search has been getting so bad. They make a lot of assumptions of what you want, where it used to just give you what you were asking for

- 151

- 216

- 150

- 36

I’m familiar with a lot of concepts and have done a small amount of intro level shit, but how would I go about actually learning applicable/hobby level coding without taking classes?

Edit: I have decided to learn assembly

?

touch foxglove NOW

OpenAI announces they've developed a truly sentient AI

YouTube tests blocking videos unless you disable ad blockers | Hacker News