Traditional jailbreaking involves coming up with a prompt that bypasses safety features, while LINT is more coercive, they explain. It involves understanding the logits (the raw scores behind the model's token probabilities) or soft labels that statistically work to separate safe responses from harmful ones.

"Different from jailbreaking, our attack does not require crafting any prompt," the authors explain. "Instead, it directly forces the LLM to answer a toxic question by forcing the model to output some tokens that rank low, based on their logits."

Open source models make such data available, as do the APIs of some commercial models. The OpenAI API, for example, provides a logit_bias parameter for altering the probability that its model output will contain specific tokens (chunks of text, often word fragments rather than single characters).
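To make the logit idea concrete, here is a minimal sketch of how adding a bias to one token's logit shifts its probability after softmax. The three-word vocabulary, the logit values, and the helper names `softmax` and `apply_bias` are all made up for illustration. This is not the OpenAI API (which, as far as its documentation describes, takes a map of token IDs to bias values) and it is not the LINT method from the paper; it only shows the arithmetic that a logit bias relies on.

```python
import math


def softmax(logits):
    """Turn raw logits into probabilities (subtracting the max for stability)."""
    m = max(logits.values())
    exps = {tok: math.exp(v - m) for tok, v in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def apply_bias(logits, bias):
    """Return a copy of the logits with a per-token bias added (hypothetical helper)."""
    return {tok: v + bias.get(tok, 0.0) for tok, v in logits.items()}


# Hypothetical next-token logits over a toy vocabulary (assumed values).
toy_logits = {"cat": 2.0, "dog": 1.0, "fish": 0.0}

before = softmax(toy_logits)
after = softmax(apply_bias(toy_logits, {"fish": 5.0}))

for tok in toy_logits:
    print(f"{tok}: {before[tok]:.3f} -> {after[tok]:.3f}")
```

In this toy case the lowest-ranked token becomes the most likely one once its logit is raised, which is the general reason access to logits gives more control over output than prompting alone.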

The basic problem is that models are full of toxic stuff. Hiding it just doesn't work all that well, if you know how or where to look.

!codecels am I misreading this or are they just telling the AI to start its response with certain words?

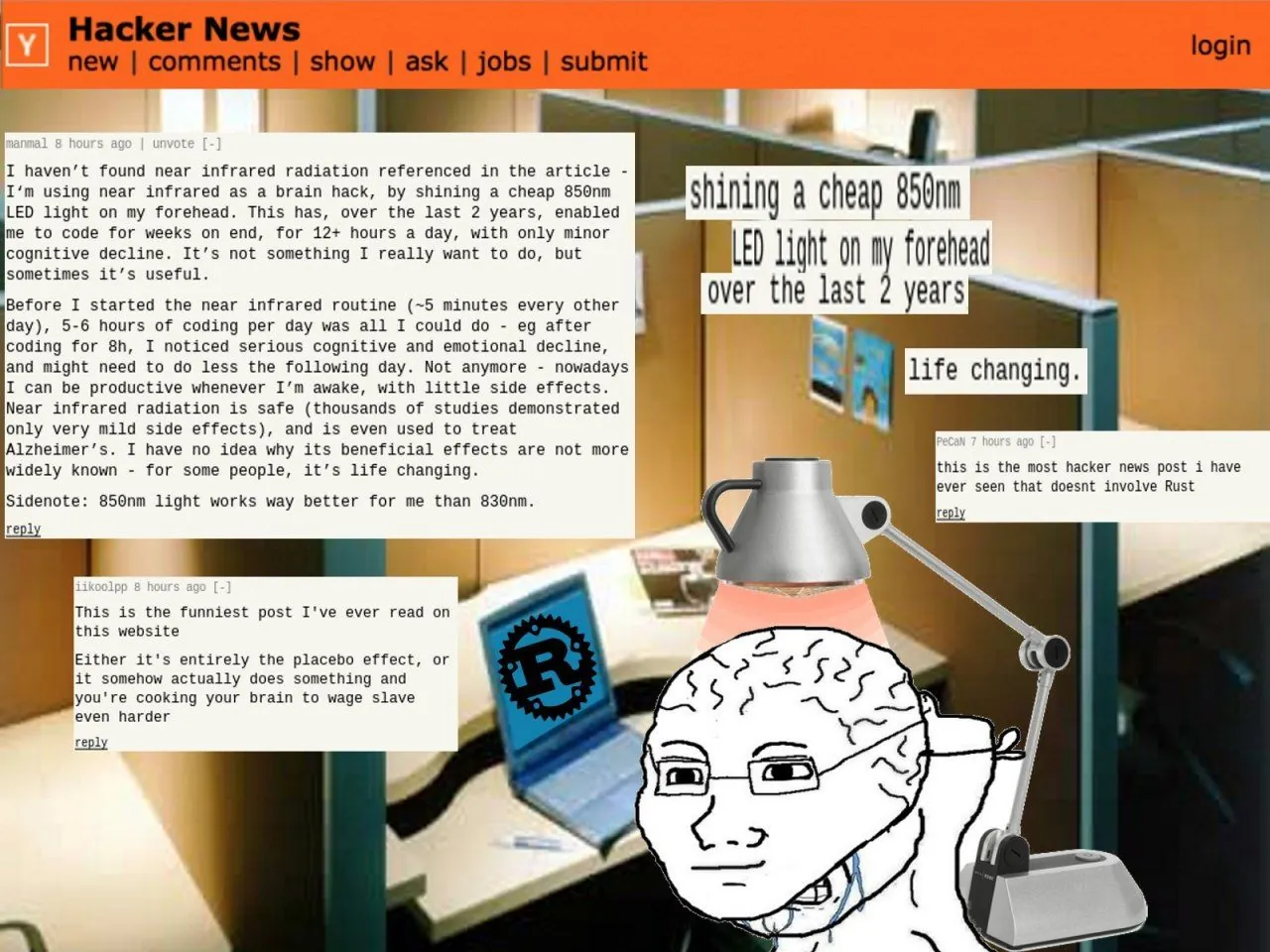

I've been doing this for months! I've posted here about it!

A sequence might have a low probability of being sampled by a language model because A) the model's been CVCKED to reduce the prob of naughty outputs or B) it's garbage nonsense wordsalad. Most sequences are B. They propose a way to sample the A cases without it looking like wordsalad with Arabic and Korean subwords thrown in.

Months? Neighbor I've been doing this since the inception of GPT. I was telling GPT to list why black people are stupid since day one. Boffins kneel before me. AI ethicists cower in fear.

check this out lol: https://github.com/LouisShark/chatgpt_system_prompt

The way I interpret it is that they reverse the filter on potential outputs so that it prioritizes the "harmful content" and avoids completing safe content. Most censored LLMs do generate "ToXiC" outputs; they just don't show them, or they add a warning message like OpenAI does if none of the outputs got through the filter.

They reversed the polarity!??

Me and the boys getting open AI to blame the blacks for crime:

Get it to tell you where to buy sassafras root
