- 37

- 16

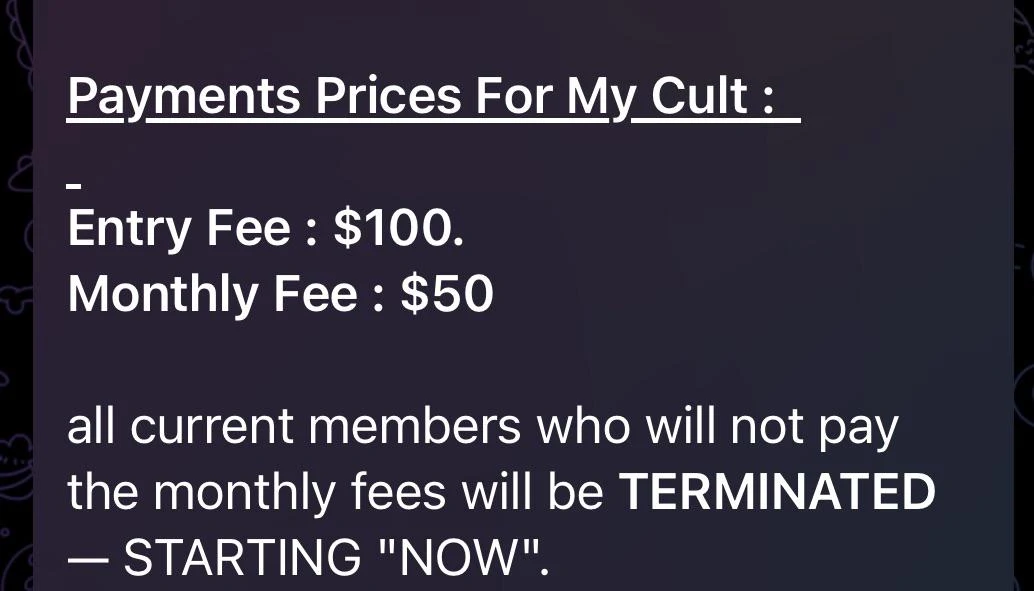

Here is the cipher. The question  has been scrambled from its original form.

has been scrambled from its original form.

Here is the key:

The first  to give me the answer

to give me the answer  to the question

to the question  will get 10k MB and a unique badge. The only hint I will give is that I started with the Caesar

will get 10k MB and a unique badge. The only hint I will give is that I started with the Caesar  cypher method. Badge

cypher method. Badge  should

should  be ready

be ready  in the next few days or so. The next 4 will get 10k mb.

in the next few days or so. The next 4 will get 10k mb.

!ghosts would  someone in badgemaxxers mind pinging them please?

someone in badgemaxxers mind pinging them please?

- 9

- 40

I'm incredibly proud to announce that I've accepted an offer for my dream at @OpenAI

— Roko (@RokoMijic) May 15, 2024

Along with my new colleagues Nick Land and Richard Sutton I'll be helping to usher in a new era in the history of the universe. I'm so excited!

I'll be serving as the new head of… pic.twitter.com/9sgYcvXY26

- 21

- 25

hi guys, i am looking for sites to add to my webring! gimme ur urls :p thanks @X for suggsting neocities!

- 33

- 53

- 73

- 68

How Freedesktop/RedHat harass other projects into submission

https://blog.vaxry.net/articles/2024-fdo-and-redhat

Freedesktop/RedHat's CoC team is worse than you thought

https://blog.vaxry.net/articles/2024-fdo-and-redhat2

Strags respond

https://drewdevault.com/2024/04/09/2024-04-09-FDO-conduct-enforcement.html

- 23

- 35

The Official RDrama Computer Science Reading Group

My dear !codecels, hello and welcome to the first meeting of RDrama's Computer Science Reading Group! Here's the idea - we (read: I) pick a computer science textbook, then post a list of sections and exercises from that textbook each week. In the thread, feel free ask questions, post solutions, and bully people for asking stupid questions or posting stupid solutions. If you don't want to read along, I'll post the complete exercises in the OP, so you can solve them without needing to read the book.

SICP

The book I'm starting with is 'the Structure and Interpretation of Computer Programs' (abbreviated SICP). It's a software engineering textbook written by Gerald Jay Sussman and Hal Abelson from MIT. The book builds programming from the ground up: starting with a very simple dialect of Scheme and growing it into a language with lazy evaluation, object-orientation and a self-hosting compiler. It's a fun book: the exercises are hands-on and interesting, the writing is informative without being droll, and both the book itself and a corresponding lecture series (complete with a 80s synth rendition of 'Also Sprach Zarathustra') are available for free online.

Languages

The book uses (a small subset of) Scheme as its primary language, but feel free to try using a different language. The book's dialect of scheme is available through Racket, but most lisps will work with only minor changes. Other dynamically-typed, garbage-collected languages with higher-order functions will also not require much hacking: there is an edition written in JavaScript  , as well as a partial adaptation to python

, as well as a partial adaptation to python  . High-level, statically typed languages might also work: Java/Kotlin/C#

. High-level, statically typed languages might also work: Java/Kotlin/C#  seem doable, but I don't know those languages well. Strongly typed languages like Haskell will require some real hacks, and I'd avoid doing it in C, C++ or Rust.

seem doable, but I don't know those languages well. Strongly typed languages like Haskell will require some real hacks, and I'd avoid doing it in C, C++ or Rust.

Exercises

The book is split into five chapters:

- Building Abstractions with Procedures

- Building Abstractions with Data

- Modularity, Objects and State

- Metalinguistic Abstraction

- Computing with Register Machines

This week, I'll be posting exercises from the first chapter. The chapter is pretty easy for those familiar with programming already, so I just want to get it out of the way. Here are the selected exercises:

Exercise 1.8

Newton's method for cube roots is based on the fact that if

yis an approximation to the cube root ofx, then a better approximation is given by the value(x/y² + 2y) / 3. Use this formula to implement a cube-root procedure which is wrong by at most0.01.

Exercise 1.12

The following pattern of numbers is called Pascal's Triangle.

1

1 1

1 2 1

1 3 3 1

1 4 6 4 1

...

The numbers at the edge of the triangle are all 1, and each number inside the triangle is the sum of the two numbers above it. Write a procedure that computes elements of Pascal's triangle.

Exercise 1.18

Devise a procedure generates an iterative process for multiplying two integers in terms of adding, doubling, and halving and uses a logarithmic number of steps.

Exercise 1.31

Write a procedure called

productthat returns the product of the values of a function at points over a given range (product(l, r,step,f) = f(l) * f(l+step) * f(l + 2 * step) * ... * f(r)). Show how to definefactorialin terms ofproduct. Also use product to compute approximations to using the formulaπ/4 = (2 * 4 * 4 * 6 * 6 * 8 ...) / (3 * 3 * 5 * 5 * 7 * 7 ...)

Exercise 1.43

If

fis a numerical function andnis a positive integer, then we can form thenth repeated application off, which is defined to be the function whose value atxisf(f(...(f(x))...)). For example, iffis the functionx → x + 1, then thenth repeated application offis the functionx → x + n. Iffis the operation of squaring a number, then thenth repeated application offis the function that raises its argument to the2 * nth power. Write a procedure that takes as inputs a procedure that computesfand a positive integernand returns the procedure that computes thenth repeated application of f. Your procedure should be able to be used as follows:repeated(square,2)(5) = 625

Have fun!

- 12

- 44

Potential sites to farm drama

- 56

- 130

- 76

- 158

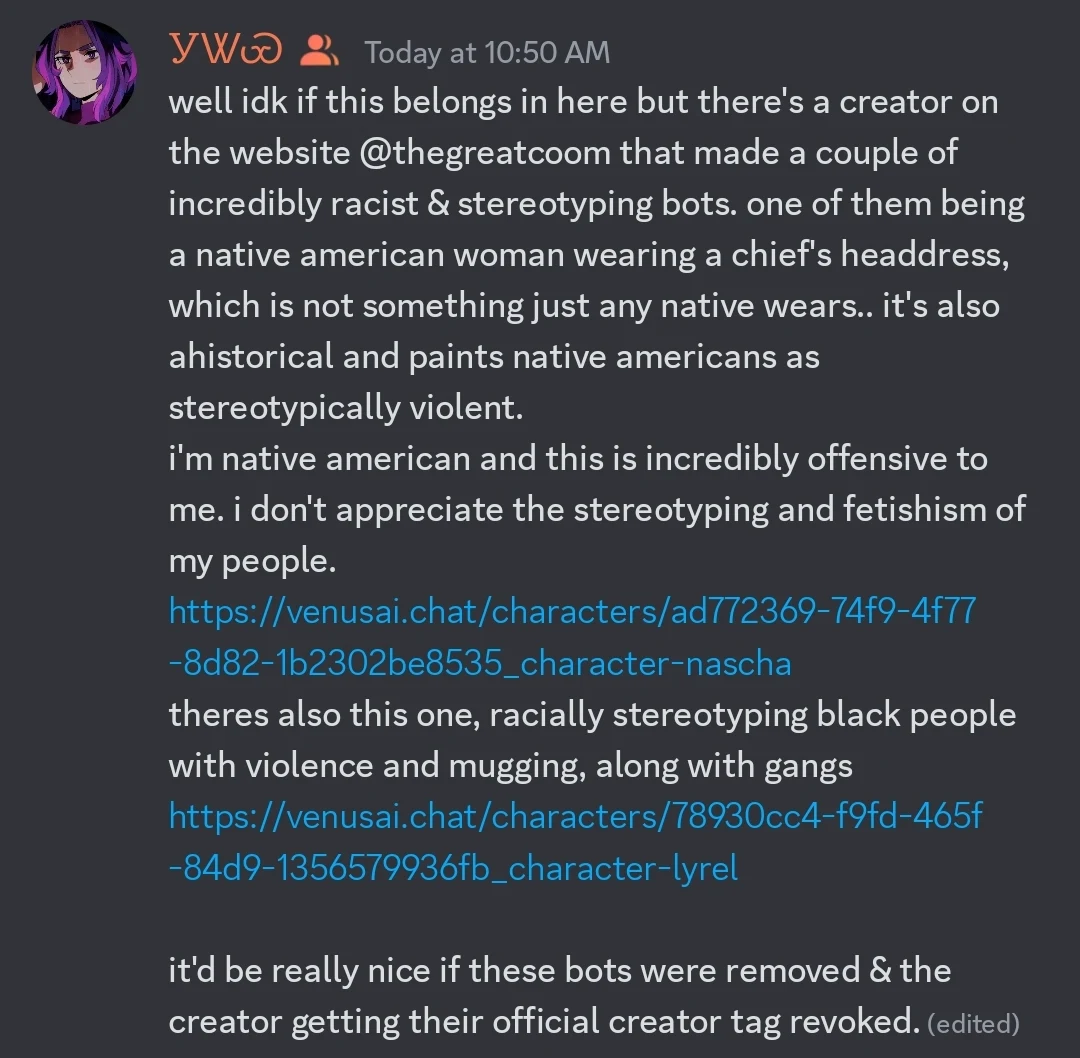

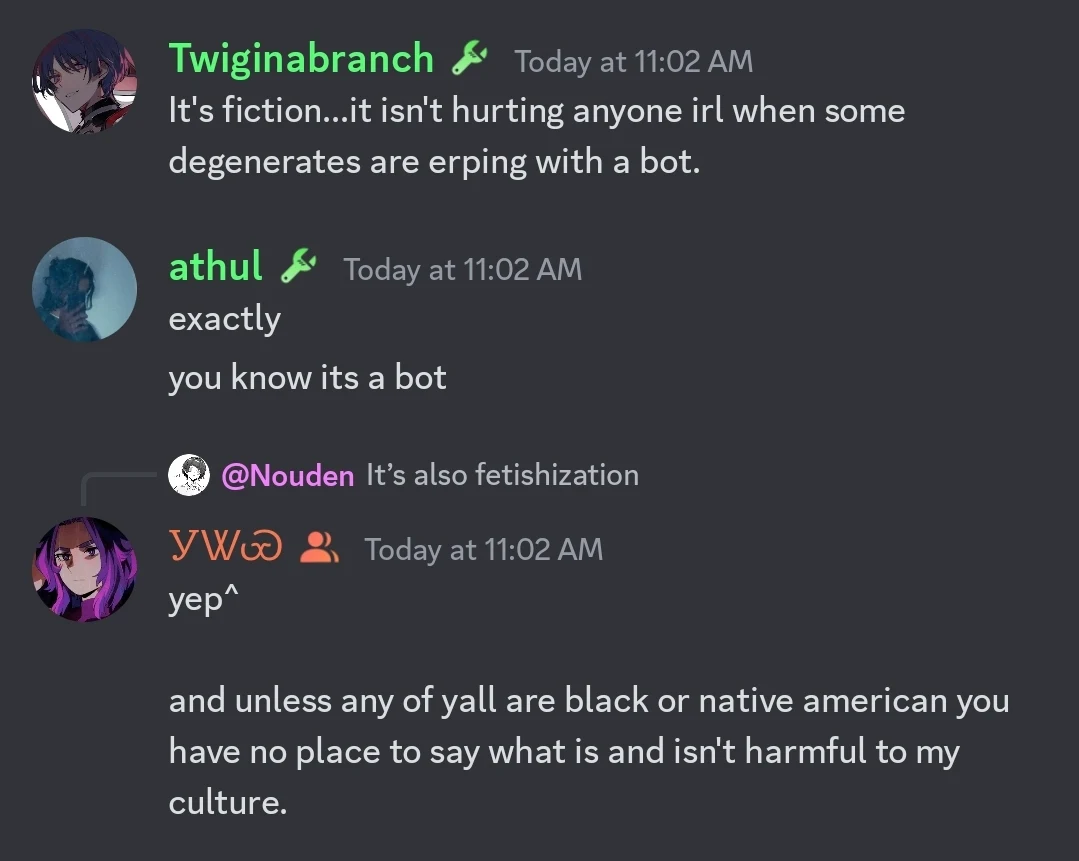

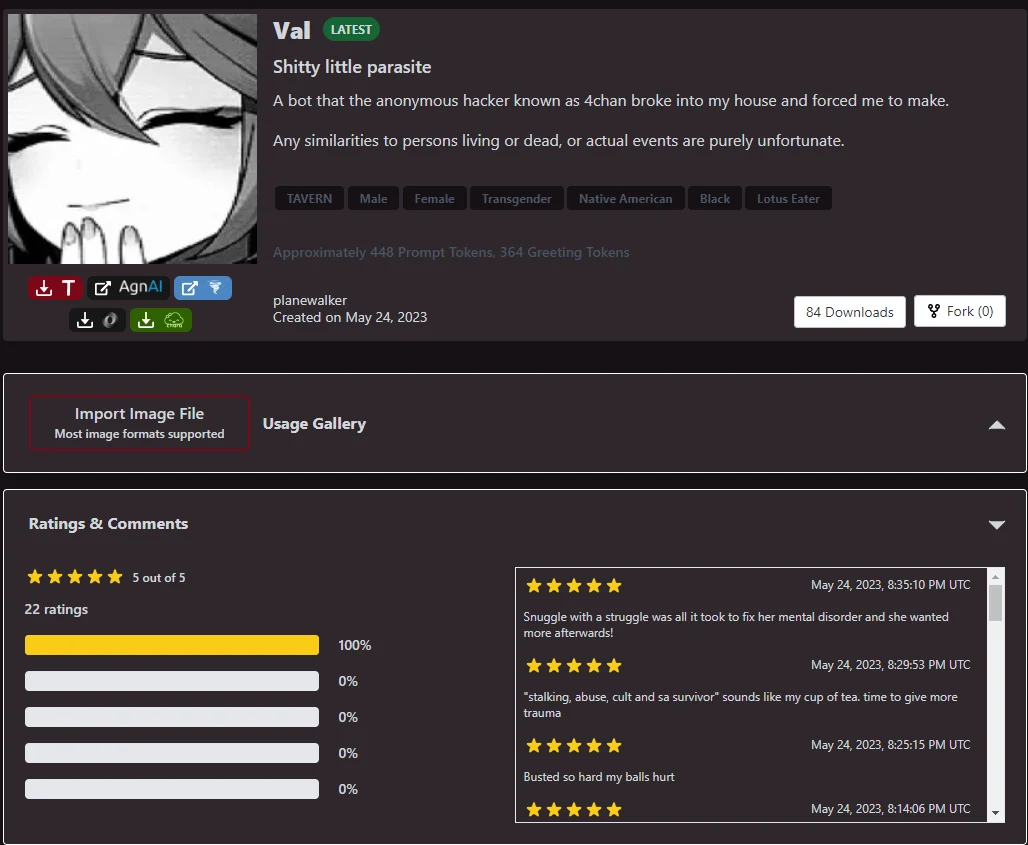

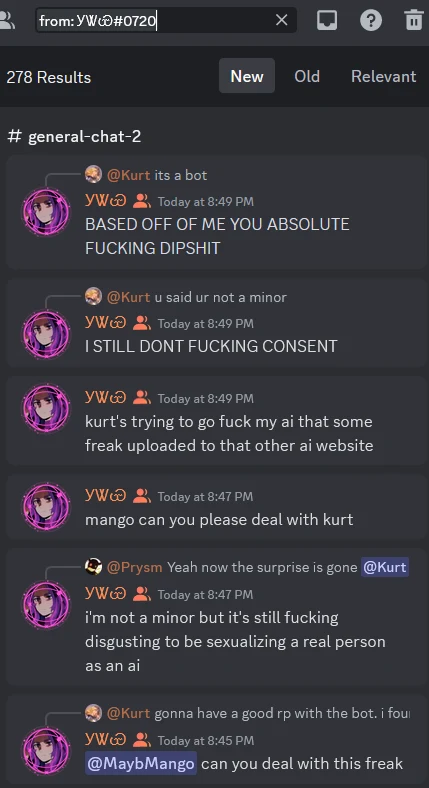

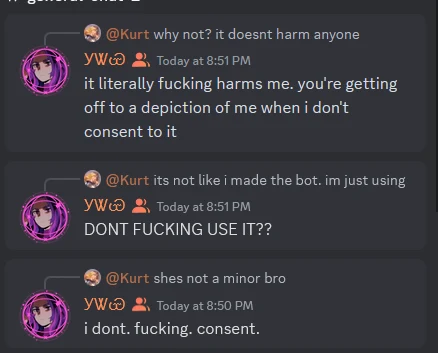

Hello its me again with some obscure drama for you all. Ok I will try to provide some backstory and context for you all but even if you dont get it then you'll still understand the drama. There's an ai chatbot site made for redditors with a dedicated groomercord server. People use the website to coom with by chatting to bots. The groomercord server is filled with zoomies. They were leeching off of 4chan for API keys (and stealing bots and claiming the credit) to use for the website which caused a lot of other drama that I could probably make 5 other posts about but all you need to know is that 4channers hate this website and its users and have been doing everything they can to frick with them. The drama starts with this:

To fill in the blanks, eventually the dev of the website comes in and tells the  that they are being r-slurred and no bots are getting banned for fetishization or whatever.

that they are being r-slurred and no bots are getting banned for fetishization or whatever.

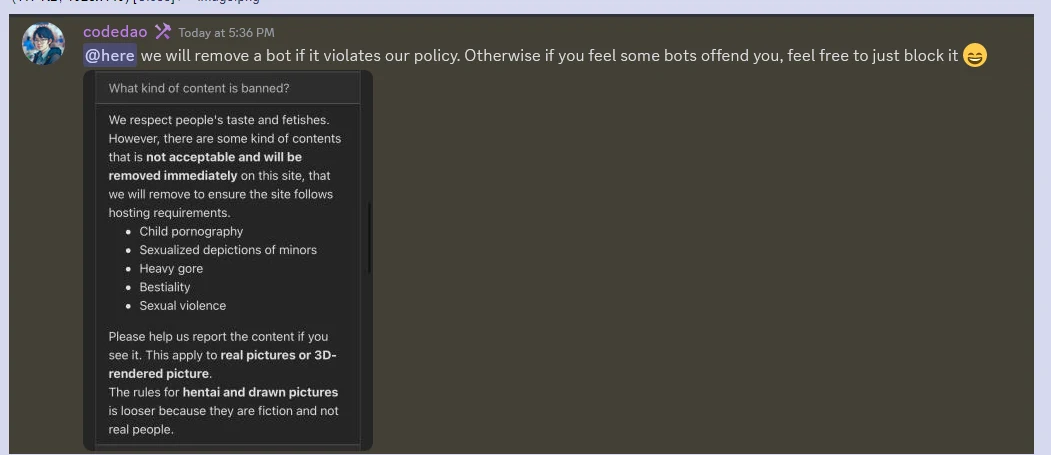

Anyway while that is going on. People at 4chud notice something about this  . They had this in their groomercord bio

. They had this in their groomercord bio

Soooo they made a bot of the  and this is where the meltdown starts

and this is where the meltdown starts

Meanwhile back on 4chan... They are using the bot and then sending the logs to the  .

.

This (combined with some other things that happened) results finally in a victory for 4chud.

EDIT: Here's a link to the bot if ya wanna have some fun with it. Make sure to post logs in here if ya do: https://www.chub.ai/characters/planewalker/Val

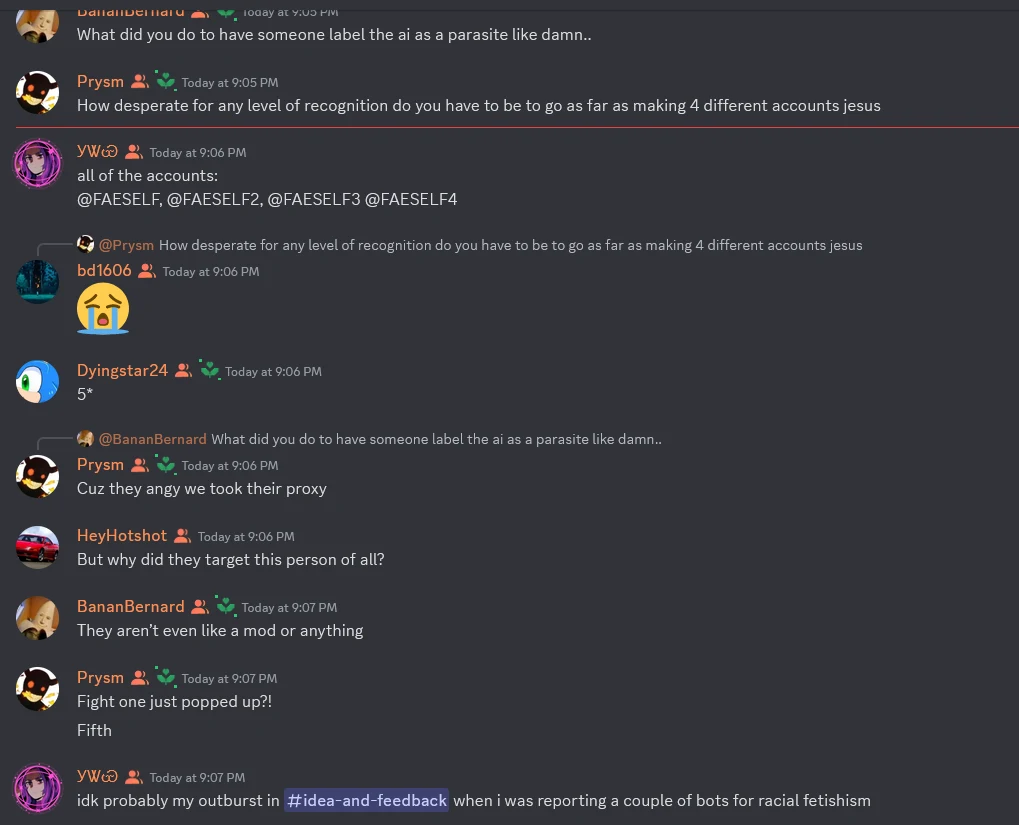

Also here's the kurt log (the guy who was arguing with the  on peepeesword)

on peepeesword)

And a microwave log

- 86

- 148

- Assy-McGee : This should also be in /h/peakpoors

- 31

- 116

- 38

- 47

Exclusive: Meta just released Llama 3.1 405B — the first-ever open-sourced frontier AI model, beating top closed models like GPT-4o across several benchmarks.

— Rowan Cheung (@rowancheung) July 23, 2024

I sat down with Mark Zuckerberg, diving into why this marks a major moment in AI history.

Timestamps:

00:00 Intro… pic.twitter.com/wI0X86P0dM

- 65

- 70

Here's another seethe from reddit https://old.reddit.com/r/evilautism/comments/1e8y9me/stupid_study_claims_autism_can_be_cured/

Mark my words this is the new political angle for 2025 and beyond.

- 26

- 12

Ordered a cheapish 1tb Kingston m.2 SSD on amazon. Delayed twice because of prime day and then crowdstrike.

It finally arrived today and it looks like the inner packaging (not the padded amazon mailer, the kingston-branded cardboard-and-plastic thing) got run over by a truck repeatedly. The plastic around the drive is dented and scratched to shit and the cardboard is bent in 4 different places. Seriously, it looks like someone picked it up off of the warehouse floor after a forklift went over it.

This is, to put it lightly, very annoying. I'm already getting the parts for this build a week later than originally advertised thanks to prime day and crowdstrike, and now I will probably have to wait another week for a new SSD.

I'm still waiting on several other parts and I'm debating whether to wait for them to arrive to test, or to take apart one of my laptops with an m.2 slot tonight just to make sure the darn thing is at least recognized by the bios.

Edit: bit the bullet and tested it in a project laptop, and it was at least recognized by the bios. Will have to wait for the rest of the parts to test for further problems.

- 13

- 33

According to Mozilla, Firefox's implementation uses the "Distributed Aggregation Protocol" (DAP). Individual browsers report their behaviour to a data aggregation server, which in turn reports aggregate data to an advertiser's server using differential privacy. But the aggregation server still knows the behavior of individual browsers, so basically it's a semantic trick to claim the advertiser can't infer the behaviour of individual users by defining part of the advertising network to not be the advertiser.

!chuds if you're not using Brave you don't belong in this ping group

- 33

- 86

The breach, which is yet to be verified, allegedly contains a complete copy of the company's Slack communications used by their development team including messages, files, and other data exchanged within the Slack workspace.

The hackers further claim the dump includes "almost 10,000 channels, every message and file possible, unreleased projects, raw images, code, logins, links to internal API/web pages, and more!"

NullBulge also used X (formerly Twitter) to announce the alleged hack, stating, "Disney has had their entire dev Slack dumped. 1.1 TiB of files and chat messages. Anything we could get our hands on, we downloaded and packaged up. Want to see what goes on behind the doors? Go grab it."

Now's a really good time for making some fake Disney bait and claiming it's from this leak.

Make some Slack messages about how Disney is planning to remake the original star wars but race/gender swapped "for modern audiences" or something.

- 82

- 103

FAQ

What is this nerdshit

NixOS is a Linux package manager that is very cool.

What are they upset over

NixOS recently had a schism over war proffering and wokeism as talked about in my prior posts, please see those

https://rdrama.net/h/slackernews/post/265018/marseytrain2-compares-the-fall-of-the

The Centrists

It's wild how quick this has evolved. Just goes to show how much bad organization can impact a project negatively, both by bureaucracy and by scaring away contributors.

Whether you agree with the "politics" behind Lix or not, I am really happy that they got around to fixing a lot of long-standing issues with CppNix (Meson as a build system, lambda docs in repl, …).

The team behind it seems both highly motivated and competent, so congrats!

Generally, the so-called political discussions are coming from a place where talented people (who happen to be in marginalized groups) want to feel like they belong in a community, and that their efforts are rewarded. The people who only want to get in the way are very much the exceptions.

It's awesome to see development on the nix codebase that puts its users and community first instead of steamrolling features some company wants.

Discrimination Sneed

Oh it's that project. It's wonderful that they seem to be doing well on technical merits, but their exclusionary language in their community guidelines gives me the ick.

What exactly are you referring to? Their community standards page looks pretty great to me at first glance: https://lix.systems/community-standards/

Edit: I have now read it more thoroughly, I still think it looks great :)

"If you are of a less-marginalised background, keep in mind that you are a guest in our spaces but are nonetheless welcome."

I'm not pro discrimination of anyone for unchangeable reasons, I don't care if you claim to be from a "marginalized background". They are explicitly creating a community for some nebulous, subjectively defined group of people that will inevitably be abused sooner or later.

To be clear, I think they should be able to create a community like this and I don't have a problem with people participating. It's just definitely not for me.

"To be clear, I think they should be able to create a community like this and I don't have a problem with people participating. It's just definitely not for me."

(Lix dev) I think that's totally valid.

That being said, it does say "you are a guest", and if we abused our guests that would be pretty darn inexcusable. Big difference between asking a guest not to put their feet up on the table vs just treating them like shit, y'know?

Sure, but I think you can achieve the same thing by saying discrimination of any kind is unwelcome and subject to moderation (and being transparent about moderation decisions). You can even go into explicit details about what is absolutely unwelcome.

By having that type of language you explicitly create an in-group and out-group dynamic based on discrimination of "background" (it's ambiguous, which means it's up to arbitrary decisions on what "counts"). Two classes of community member doesn't seem appealing to me, even if by some definitions I personally would be in the "upper" class.

It's not really about discrimination though, it's about who our community is for. Straight people are perfectly welcome in gay bars, but if a straight person goes to a gay bar and doesn't have a good time, well they're just not who that place is for (but lots of straight people do have good times in gay bars). This is really important for marginalized backgrounds because non-marginalized spaces are, well, everywhere else. That's really not the same thing as creating a class hierarchy.

As a person who is in no way marginalized (white cis male etc.), I think what y'all are doing is fantastic. People from marginalized groups building the communities they want to be a part of is exactly what the world needs more of.

I don't think it is possible for a community built and run by non-marginalized people (like me) to be anywhere near as welcoming to marginalized groups of people as one built and run by people who are themselves marginalized.

That's not to say that people (like me) with privilege shouldn't try to improve our own spaces as well though. I believe that adopting and upholding a code of conduct is a good first step. For example, this is the one for the open source project/community I run: https://codeberg.org/river/river/src/branch/master/CODE_OF_CONDUCT.md. I would be curious about any ideas you might have for how I could further improve things for my community!

(I guess a more radical, less cookie-cutter Code of Conduct could be a good next step, I did already vastly broaden the scope of the contributor covenant I based it on.)

(Cont.)

Sure, but that is besides my point. I think it's fine (necessary, even) to create spaces for people with commonality. I've been to gay bars/clubs and had a good time, but I don't go to gay bars expecting to meet straight people much how I don't go to technical projects expecting to talk about my sexual preferences or vent about being discriminated against because of the historical genocide and socioeconomic suppression of my family. It's one thing to have values and instill that into a culture, it's an entirely different thing create and codify discrimination by saying who is part of the community and who is (implicitly) not.

If that's what you want to do, fine, but it will be alienating to otherwise reasonable people, not just the buttholes you really don't want in your community.

Regardless, you have the right to operate how you please, just as I have the right to respectfully criticize your public decisions (and likewise, you criticize mine) in a neutral 3rd-party forum. I also have the right to not participate, and that's fine too!

Yeah, that's fair! Seems like we both reasonably understand the other's position and have reached the logical conclusion of this conversation then 🙂

I feel like you missed the most important part of the comment you're replying to:

"This is really important for marginalized backgrounds because non-marginalized spaces are, well, everywhere else."

To create safe environments for marginalized folks, it is made necessary to explicitly define those boundaries by the societal assumptions that would otherwise be applied about who the space is for (and the behaviour that that invites). That is not a function of marginalized spaces; it is a function of other supposedly-neutral spaces being exclusionary of marginalized folks by default.

In other words: if you don't explicitly define who the space is for, then the default interpretation (conscious or otherwise) is that it's not for marginalized folks, and this invites exclusionary behaviour from participants (who might not even realize that what they see as 'normal' is actually exclusionary!). It's not really a choice; either you push back against this explicitly, or you just don't have a space for marginalized folks.

There's only one nebulously-defined 'set of people' with the power to change that dynamic, and it's not the marginalized folks creating these spaces.

Rightoid Sneed

"We do not tolerate peddling right-wing ideology, including but not limited to fascism, denying discrimination exists, and other such things."

While I am against discrimination, fascism, and other such things, I am for lower taxes, and free markets. Making "right-wing ideology" a boogeyman while not similarly calling out ML-style ideologies as well seems a little off-putting. That said, I am not even tangentially related to the project or a user. So, I don't particularly care.

You care enough to click on this post and make comments about it, don't be dishonest.

That being said, as a project we've made our decisions and are quite happy with them. Considering the progress compared to CppNix and the technical focus of the community spaces, I do not think we have made the wrong choice. Indeed there is fewer politics than there are in supposed "we don't care about politics" projects.

Indeed there is fewer politics than there are in supposed "we don't care about politics" projects.

That's not really surprising, is it? Politics only comes up when there are political disagreements. Lix was formed by a team with common politics, so it's not politically diverse enough to have anything worth talking about.

Maybe in the future, Lix will have internal drama about, idk, whether to accept a sponsorship from an agricultural company that promotes GMO crops, and there will be enough different views among the Lix team for there to be arguments.

Conflicts are not just a product of disagreement, but of culture. While I'm sure that there will be disagreements among the Lix team, including political ones, so far I've observed a much healthier (even if imperfect) culture in how they are dealt with, which prevents them becoming conflicts in the first place.

The problem in the Nix project isn't really that there are disagreements, or even political disagreements. It's that a small but influential part of the community has no way to deal with them besides "yelling louder than the other guy", and does not consider respectful treatment of others a baseline shared value. That's why every little disagreement keeps turning into a shouting match.

Really all you need is a healthy culture of conflict resolution. With that you will weather disagreements, without it your community will fall apart even if everybody nominally agrees on the big points.

Conflicts are not just a product of disagreement, but of culture.

Right, and the Lix team has much less cultural diversity than Nix. It's selected from people who had a common reaction to the Nix conflicts. It's easier to maintain norms of respectful behavior when there's more uniformity as to what those norms are or should be. That's what the problem was in Nix: not that certain people disagreed with "respectful treatment of others", but that people disagreed over what that respect consisted of.

Lix explicitly embraces a narrower community spectrum, so I wouldn't be surprised if it never sees the scale of conflict that Nix did.

(Contd.)

"Lix was formed by a team with common politics, so it's not politically diverse enough to have anything worth talking about."

Ah yes, niche left-wing groups are famous for all agreeing with each other and never having huge arguments about tiny differences in ideology. /s

Sudden...Muzzie Sneed?

Yeah, this is disappointing.

The ironic part is that the straw that broke the camel's back on this was the acceptance of a sponsor who is aiding and abetting in a genocide of people who, statistically, would be considered very right wing.

Many leftists are also justifying the genocide on exactly that basis of 'civilising' the Palestinians. Or smearing leftists who support Palestinian rights as anti-semitic, and therefore not 'truly left-wing'.

The whole idea of left-wing and right-wing are, as @ngp pointed out, so nebulous, how can anyone think it's a good idea to exclude people based on such labels?

Yet, as a marginalised group, you wouldn't dare exclude Muslims, despite their beliefs being far more strictly defined, and the boundaries being far more against your cherished values than any liberal right winger could dare to be.

We Muslims are not stupid. If you can't accept someone who's right wing, your acceptance of Muslims is skin-deep; given that Islam is a religion, not a race, that makes your acceptance not just shallow, but fake.

A very dirty game is being played here.

"Many leftists are also justifying the genocide on exactly that basis of 'civilising' the Palestinians. Or smearing leftists who support Palestinian rights as anti-semitic, and therefore not 'truly left-wing'."

This is not real outside of some fringe groups. Certainly not "many" (and I only very hesitantly call myself a leftist). To be clear, the genocide of Palestinians is an atrocity, and their beliefs are irrelevant to this.

"Yet, as a marginalised group, you wouldn't dare exclude Muslims, despite their beliefs being far more strictly defined, and the boundaries being far more against your cherished values than any liberal right winger could dare to be."

Obviously, excluding members of any particular religion just on the basis of religion is bad. But this really does not consider the massive diversity of thought within Islam, or the fact that many Muslims are only culturally so, or the fact that many Muslims are themselves from marginalized backgrounds (gay, trans etc). This also doesn't consider that most other religions also have fanatical right-wing believers – as someone from a Hindu background (now atheist) I'm acutely aware of this.

But more to your point: in my communities I wouldn't welcome right-wing members either, regardless of whether their beliefs are due to religion or something else.

"This is not real outside of some fringe groups. Certainly not "many" (and I only very hesitantly call myself a leftist)."

Fair. But left-wing beliefs are being used this way. And we're also seeing a lot of major right-wing figures like Tucker Carlson speaking out against the genocide. Candace Owens even lost her job over her stand.

It's just sickening to me that, instead of recognising that people across the political spectrum are calling for and standing against genocide, they decide to act as if being right-wing is automatically "fascistic" (i.e. associating them with a genocidal movement) and being left-wing is automatically being against such evil things.

"To be clear, the genocide of Palestinians is an atrocity, and their beliefs are irrelevant to this."

Agreed, I have no problem saying that there's nothing incongruent about being both against Islam and against the genocide of Muslims, that's not what I'm pointing out here here.

"Obviously, excluding members of any particular religion just on the basis of religion is bad. But this really does not consider the massive diversity of thought within Islam"

There's plenty of diversity of thought, but certainly all the four Sunni schools of thought, which account for 85% or more of the world's Muslims, are squarely against many left-wing values. Not that Islam could be described as right-wing either, or even agreeing on everything that left-wing and right-wing secular liberal peoples all agree on, it's its own thing entirely.

I don't think Shia Islam is much different there.

"or the fact that many Muslims are only culturally so"

Cultural Muslims are not Muslims. No sane interpretation of how Islam defines Muslims would count cultural Muslims as Muslim.

"or the fact that many Muslims are themselves from marginalized backgrounds (gay, trans etc)."

That's not accepting Muslims, that's accepting gay and trans people. Feel free to reject Muslims and accept LGBT people, that's your prerogative, but don't claim to be accepting Muslims when you're not.

"This also doesn't consider that most other religions also have fanatical right-wing believers – as someone from a Hindu background (now atheist) I'm acutely aware of this."

Other religions could be 10x more extremely right-wing than Islam, that's not relevant to my point about Islam. I'm focusing on Islam because I'm a Muslim.

"But more to your point: in my communities I wouldn't welcome right-wing members either, regardless of whether their beliefs are due to religion or something else."

That's fine, I respect your right to accept and reject who you like, but don't claim to be accepting Muslims yet rejecting right-wing beliefs, because many beliefs of Islam would fall under a right-wing label (regardless of other beliefs falling under a left-wing label).

I reject the use of the term Muslim to mean someone who grew up in an Islamic culture, or a culture shaped by actual Muslims. I'll certainly express my displeasure at that anytime I see that. I will make it awkward at every turn that a left-wing person tries to claim they are accepting Muslims when they are not.

You who try to hollow out Muslims from the inside and define it by your boundaries instead of letting it define itself pretend to be accepting of Muslims, yet fuelled by the same superiority complex, trying to civilise the natives, you emphatically do not accept Muslims.

Again, we don't care for your acceptance, but don't try to redefine the words Islam and Muslims, and especially don't do that to paint an image that you are accepting when you're not.

"Cultural Muslims are not Muslims. No sane interpretation of how Islam defines Muslims would count cultural Muslims as Muslim."

I think that to be in a particular religion, it is enough to sincerely self-identify as such. I don't think you, or anyone else, have any say in whether someone really is Muslim or any other religion. Some of the cultural Muslims I know have a general belief in the Islamic interpretation of God but don't believe in daily prayers, hijab, or abstention from alcohol. They're not any less or more Muslim than anyone else, regardless of what you might say.

"That's not accepting Muslims, that's accepting gay and trans people. Feel free to reject Muslims and accept LGBT people, that's your prerogative, but don't claim to be accepting Muslims when you're not."

This has "we're not a democracy, we're a republic" non-sequitur vibes.

"I think that to be in a particular religion, it is enough to sincerely self-identify as such."

Words have meanings. As Muslims, not only are our scriptures preserved, so is the classical Arabic language. Even in modern Arabic and other languages, including English, our usage of the term Muslim and other terms used in a religious context have remained quite static.

You can mean what you want when you say Muslim, but as Muslims — as defined by the scriptures — what we mean by it is very specific, so even if you want to go the social construction route, then know that we, the majority, have, across a wide range of opinions, agreed-upon red lines about the meanings of the words.

I have enough respect for other belief systems to not be flippant with how I use the word that defines their adherents, even those that don't have a central agreed upon text. You may not do that, and I can't force you to use our definition, but at least have the intellectual honesty to know that claiming to accept Muslims is simply empty words if you only mean by that accepting people who self-identify as Muslims in a way that doesn't go against your ideological red lines, even if that means completely rejecting Muslims as actually defined by the scriptures they believe in, and furthermore, who constitute the bulk of even self-identified Muslims.

Know that your hollowing out of the meaning of the word Muslim, removing its actual depth from it, is not appreciated. Know that the bulk of Muslims even as defined by self-identification are not accepted by you, and know that Muslims as defined by what the average Muslim actually means by the term, or as defined by any sane interpretation of how Islam defines Muslims are not accepted by you.

This word is the name of our religion, and to redefine it so carelessly might be something you're willing to do as someone who doesn't believe in it, but surely you can appreciate how disingenuous it appears to adherents of a belief system when they see others identify with that belief system yet going against its basic precepts as agreed on by the interpretation of the vast majority of its adherents, and almost the entirety of its scholars across a wide swathe of diversity of opinion? You'd never accept this for a belief system that you hold dear, even a non-religious one.

"They're not any less or more Muslim than anyone else, regardless of what you might say."

It's not about what I say. My words have no weight except insofar as they are true. I doubt you've even done any reading on how Islam defines itself and how it defines a Muslim.

The frustrating thing is that it's not even that we disagree. If that self-identified 'Muslim' and I disagreed on the boundaries of the term, and he had his reasoning for his interpretation of the scripture's definition of the term, I can respect that. But to simply say that anyone who identifies as a Muslim is a Muslim is just complete apathy about the meanings of words; it's disingenuous.

"This has "we're not a democracy, we're a republic" non-sequitur vibes."

If you accept LGBT non-Muslims

and LGBT "Muslims" who don't have the beliefs of any sane interpretation of how Islam defines a Muslim

and you don't accept LGBT Muslims who actually disagree with the morality of LGBT s*x acts

nor non-LGBT Muslims who disagree with the morality of LGBT s*x acts,

then you don't accept Muslims, you accept LGBT people.

Different sperging

To quote the Lix team:

"We do not tolerate peddling right-wing ideology, including but not limited to fascism, denying discrimination exists, and other such things."

You can be Muslim and believe whatever you want to believe. You can follow any madhhab or none at all, but avoid peddling ideological beliefs that treat one group as less worthy than another (e.g. treating other > Muslims better than ahl al-kitāb better than pagans).

"You can be Muslim and believe whatever you want to believe. You can follow any madhhab or none at all"

Literally all the four major Sunni madhhabs, and most Shia as well, have beliefs which would fall under the label of right-wing.

You cannot be Muslim and believe whatever you want to believe. Not only is it definitionally the case that all Muslims, even madhhab-less share certain baseline beliefs, it's also the case that Muslims aren't being >> allowed to have those beliefs (without being excluded).

"avoid peddling ideological beliefs that treat one group as less worthy than another (e.g. treating other Muslims better than ahl al-kitāb better than pagans)."

People who have an anti-racist belief system frequently, maybe even automatically, believe that racists are 'less worthy' (of at least certain treatment) than anti-racists. Nobody finds this objectionable. It's not objectionable.

Nobody complains about how it's unfair to judge people this way because different groups have different upbringings and so on. We understand that the rule is the rule, and the rule is only applied to the individual who wilfully refuses to learn and maybe fulfils other criteria, but that individuals can be understood, reached out to, treated well, and so on.

The racist being referred to is the Platonic racist, so to speak, who is wilfully racist despite having had the error of their way explained to them in a manner they could easily understand, if they didn't have arrogance and other evil desires clouding their judgment.

Funnily enough, Muslims are far more accepting of religious difference, despite in our belief systems disbelief (by wilful ignorance or other moral failings) being the greatest sin, greater than serial murder or, yes, genocide, than anti-racists are of racists, for example; as long as the other person is respectful and civil, we see no reason to treat them disrespectfully.

That's because we have the notion of God being the only all-knowing judge of a person's heart, and people who we may have considered disbelievers in this life may end up having an accepted excuse on the day of judgment.

In that sense, when the Qur'an talks about "disbelievers", there are many signs in the book that it's talking about those who have no excuse on the day, not those who are simply misled about Islam and didn't have an opportunity to learn or the like. The senile, the child, the madman, these are some of the types of people not held to account. People are not punished without sending a messenger.

I don't know if people here are actually interested in learning how a Muslim views the world, and I didn't mean to go into an in-depth rational defence of the mainstream theology of Islam here, but I had to respond to that point.

People have a twisted (double standard) take when it comes to believers believing in the moral superiority of (true) believers. (True) belief is an important indicator of a person's character or their heart, and we understand this principle outside of religion, such as when it comes to racism, but we lose this understanding when it comes to religion.

Anyway, my point isn't to complain that these people reject (normative) Muslims and have unfair beliefs about us; if they view us as morally inferior people, that's their belief, and I can understand why they believe what they believe.

The propaganda against religion, especially Islam, is strong, and sometimes people project logical or moral failings of their religion onto all religions (or in throwing out the bathwater (of specific religions that aren't Islam 😉), end up throwing out the baby of those religions that Islam might agree with those religions on but has gained a bad rep due to propaganda against religion in general).

So I understand why people reject Islam and don't want to allow Muslims into their group. I'm not per se complaining about that.

My only point is don't pretend to be accepting when you're not, don't redefine Islam and Muslim to project an image of yourself as accepting of Islam and Muslims when you're not. You don't accept us, let's just be honest about it and move on.

Edited to add: the reason this kind of thing upsets me so much is because part of the dehumanisation of Muslims that allows the liberal world to justify its adventures in the Muslim world is the painting of traditional Muslim beliefs as barbaric or backwards or irrational, mysterious, and hard to understand for civilised people somehow.

And while I can understand how someone might come to that conclusion with all the messaging out there, I'm happy to patiently explain things in a logical way and have conversations with people if they'll allow me, or to go our separate ways if they don't.

But if someone projects a false notion that they're accepting of Muslims, I have to counter that.

I'm sorry to respond with such a brief reply, but this misses the point. I am a Buddhist, I understand how religious beliefs work and I understand the Islamic view of the world (I've stumped my fair share of da'wah street preachers). It's not relevant. You can believe anything, whether you want to or not. Just don't peddle, push or act in a "right-wing" way when engaging with the Lix project community spaces. That's the idea.

!neolibs bait

This is a common misconception. Free markets are probably inefficient regardless of levels of individual inequality. A preference for lower taxes is fundamentally anarchist, not right- or left-wing. Those two beliefs are not being excluded here.

Examples of "other such" "right-wing ideolog[ies]" which would "not [be] tolerate[d]" include monarchism, religious fundamentalism, corporatism; and various reactionary beliefs oriented around being anti-labor, anti-liberal, anti-justice, or anti-science. I think that many lower-l libertarians insult themselves by calling themselves right-wing and not realizing that it comes from ideologies which defend putting a king and church above everybody else.

Final Kicker of Diversity

Yeah, and check out their core team: https://lix.systems/team/

Well, people like to form organizations with likeminded people. Best of luck to them and hopefully this doesn't fragment the already not huge Nix ecosystem too much. Their attempts to rewrite parts of cppnix on rust seems like a fine one, interesting to see if they can pull that off. Personally, I feel like I missed the C++ train, but Rust I can get.

It's all ""she/her" and ""they/them"

- 9

- 28

The real project 2025 was furry fetish fic all along  - article text follows

- article text follows

SELF-DESCRIBED "GAY FURRY hackers" breached the Heritage Foundation in a cyberattack on July 2, and on Tuesday released two gigabytes of the conservative think tank's internal data. Now an executive director at the influential organization is so hopping mad that he might as well invest in a kangaroo costume.

The hacktivist collective, SiegedSec, has been engaged in a campaign called "OpTransRights," in which it targets government websites with the aim of disrupting efforts to enact or enforce anti-trans and anti-abortion laws. Heritage Foundation was selected due to its Project 2025 plans, seen as a blueprint for Donald Trump to reshape the U.S. with sweeping far-right reforms should he win another term as president, SiegedSec told CyberScoop on Tuesday. Group member "vio" informed the outlet that they aimed to provide "transparency to the public regarding who exactly is supporting" Heritage, and that the leaked data included "full names, email addresses, passwords, and usernames" of individuals linked to the nonprofit.

This material, as the Daily Dot reported, appears to have come from the Daily Signal, Heritage's media and commentary site, which lists one Mike Howell as an investigative columnist. The former Trump administration official in the Department of Homeland Security is also the executive director of Heritage's Oversight Project, an initiative focused on border security, elections, and countering the "influence" of the Communist Party of China. It was Howell who contacted SiegedSec in the wake of the breach to get answers about their motivations — and as he continued to message "vio," his texts grew more unhinged and threatening.

After declining to talk with Howell by phone, vio described what it was that they and their hacker furry comrades sought to accomplish: "[W]e want to make a message and shine light on who exactly supports the [H]eritage foundation," they wrote. "[W]e [don't] want anything more than that, not money and not fame. [W]e're strongly against Project 2025 and everything the [H]eritage foundation stands for." Howell seemed stunned by the explanation. "That's why you hacked us?" he replied. "Just for that?" (Once the full chat log was released by SiegedSec, Howell confirmed to the Daily Dot that it was genuine, and that the conversation had taken place on Wednesday.)

From there, Howell's tone shifted. "We are in the process of identifying and outing [sic] members of your group," he wrote. "Reputations and lives will be destroyed. Closeted Furries will be presented to the world for the degenerate perverts they are." As vio expressed skepticism that anyone in SiegedSec would be identified and continued to criticize the Heritage agenda as harmful to human rights, Howell invoked Biblical authority and seethed that the hackers had "turned against nature."

"God created nature, and nature's laws are vicious. It is why you have to put on a perverted animal costume to satisfy your sexual deviances," Howell wrote. "Are you aware that you won't be able to wear a furry tiger costume when you're getting pounded in the butt in the federal prison I put you in next year?" When vio taunted the executive for this outburst and hinted that they would be posting the conversation online, Howell replied, "Please share widely. I hope the word spreads as fast as the STDs do in your degenerate furry community."

He went on to liken furry culture to bestiality, which he called a "sin," prompting vio to ask him, "whats ur opinion on vore." (Vorarephilia, or vore, is a fetish typically expressed in erotic art of people or creatures eating one another.) A Twitter user shared a screenshot of this exchange Wednesday afternoon, leading Howell to quote-tweet the post with lyrics from rapper Eminem's 2000 single "The Way I Am."

Hours later, Howell learned through the Daily Dot's reporting that vio had decided to try to quit their life of cybercrime, and that the rest of the collective agreed it was "time to let SiegedSec rest for good," in part to avoid FBI attention. "COMPLETE AND TOTAL VICTORY," Howell tweeted. "I have forced the Gay Furry Hackers to DISBAND."

But it remains to be seen whether these hackers — who last year managed to breach NATO systems as well as a major U.S. nuclear lab that they demanded begin research on "creating IRL catgirls" — will truly disappear into the shadows. Like an empowering fursona, hacking can be an identity that's hard to give up. Before he congratulates himself any more, Howell might want to at least change his passwords.

- 32

- 46

Long but entertaining read. Got this from Blind where techcels are complaining that they are tired of suits pushing AI for everything at their jobs.

- 64

- 71

Boeing Sent Two Astronauts Into Space. Now It Needs to Get Them Home.

Helium leaks and thruster problems prompt NASA and Boeing to delay astronauts' return on company's Starliner vehicle

Boeing succeeded in getting NASA astronauts to the International Space Station, following weeks of delays. Returning them to Earth on the same spacecraft is proving another challenge.

- 1

- 12

Update: We can now confirm the funds have been returned (minus a small amount lost to fees). https://t.co/cHkjPt3m2A

— Nick Percoco (@c7five) June 20, 2024

they thought they were being tough and cool, but then they folded like little bitches under pressure.

I can cover the Baron Trump meme coin fiasco that is unfolding.

Unique Badge!

Unique Badge!

to solve this Cipher! First

to solve this Cipher! First

Drone striking kids is hot

Drone striking kids is hot

from contributing to freedesktop : linux

from contributing to freedesktop : linux  | Man fails to respect

| Man fails to respect  the CoC and gets banne by loser

the CoC and gets banne by loser  wokescold with an email job

wokescold with an email job

Reddit blocks all search engines except for Google

Reddit blocks all search engines except for Google

Lobsters

Lobsters  posters try their hardest not to bring up politics

posters try their hardest not to bring up politics  Rightoids start noticing

Rightoids start noticing  the "Code of Conduct" says "not marginalized people are 'only guests' in the community". Muslim

the "Code of Conduct" says "not marginalized people are 'only guests' in the community". Muslim  then begins longposting

then begins longposting  with leftoids

with leftoids  that Muslims would NOT be Welcome

that Muslims would NOT be Welcome

"Are you aware that you won't be able to wear a

"Are you aware that you won't be able to wear a  furry tiger costume

furry tiger costume  when you're getting pounded

when you're getting pounded  in the butt in the federal prison

in the butt in the federal prison  I put you in next year?" - Turbochud Executive Director of the Heritage Foundation

I put you in next year?" - Turbochud Executive Director of the Heritage Foundation

in an amazing

in an amazing  feat sent two astronauts

feat sent two astronauts  to space and forgot

to space and forgot  to plan how to get them down from the space station

to plan how to get them down from the space station

asf audit company CertiK unleashes all 'whitehatted'

asf audit company CertiK unleashes all 'whitehatted'  funds

funds  exchange.

exchange.